I want to write a little program that takes two sound files and plays them at the same time, but which adds the position of the mouse's scroll wheel to one of the playback positions so that you

can easily twiddle the synchronization between them. I'm not sure that this would be particularly useful for anything, but I think it would be fun.

Archive for August, 2011

Sound synchronizer

Sunday, August 21st, 2011Preventing cheating in online games

Saturday, August 20th, 2011In computer security, there is a general rule which says that you should never trust anything sent to your server by client software running on a user's machine. No matter how many cryptographic checks and anti-tampering mechanisms you put into your code, you can never be sure that it's not running on an emulated machine over which the user has complete control, and any bits could be changed at any time to give the server an answer it accepts.

This a problem for online gaming, though, as cheaters can give themselves all sorts of capabilities that the game designer did not plan for. This (apparently - I am not much of a gamer) reduces the enjoyment of non-cheating players.

However, games do have one advantage here - they generally push the hardware to (something approximating) its limits, which means that running the entire game under emulation may not be possible.

So, what games can do is have the server transmit a small piece of code to the client which runs in the same process as the game, performs various checks and sends the results to the server so it can determine if the user is cheating or not. The Cisco Secure Desktop VPN software apparently uses this technique (which is how I came to think about it). I have heard this small piece of code referred to as a "trojan" in this context, although this terminology seems misleading because this particular kind of trojan doesn't run without the users knowledge and consent, and is only malicious in the sense that it doesn't trust the user (the same sort of maliciousness as DRM, which is not quite as bad as illegal malware).

The trojan for an online game could send things which are very computationally intensive to compute (such as the results of the GPU's rendering of the game). Because the server can keep track of time, doing these computations in anything less than real time would not suffice. To avoid too much load on the server, the computations would have to be things that are easier to verify correct than to compute in the first place (otherwise the server farm would need to have a gaming-class computer for every player, just to verify the results). And to avoid too much load on the client, it should be something that the game was going to compute anyway. I'm not quite sure how to reconcile these two requirements, but I think it should be possible.

The system should be tuned such that the fastest generally available computer would not be powerful enough to emulate the slowest computer that would be allowed to run the game. Depending on the pace of progress of computer technology and the lifespan of the game, it might eventually be necessary to change these requirements and force the users of the slowest computers to upgrade their hardware if they want to continue playing the game. While this would be frustrating for these players, I don't have a problem with it as long as there is a contract between the players and the game company that both agree to and are bound by - it would be part of the cost of playing without cheaters. Though I would hope that independent servers without these restrictions would also be available if there is demand for them.

Special relativity game

Friday, August 19th, 2011I think it would be awesome if somebody made a 3D, first person computer game where the speed of light was significantly slower (perhaps 30mph as in the Mr Tompkins books) and did relativistically correct rendering so that you could see the geometric distortions and Doppler shifts as you walked around. It might be necessary to map the visible spectrum onto the full electromagnetic spectrum in order continue to be able to continue to see everything (albeit with reddish or bluish hues) when you're moving quickly.

It would have to be a single player game because (in the absence of time travel) there is no way to simulate the time dilation that would occur between players moving around at different speeds.

It appears that I'm not the first person to have this idea, though the game mentioned there (Relativity) doesn't seem to be quite what I'm looking for.

I do realize that it might be quite difficult to do this with current graphics engines, but I'm sure it could be done in real time, perhaps with the aid of suitable vertex and pixel shaders for the geometric/chromatic distortions respectively.

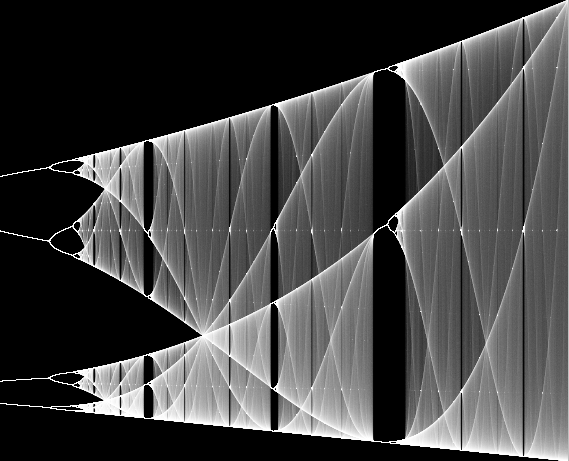

Bifurcation fractal

Thursday, August 18th, 2011I wrote a little program to plot the Bifurcation fractal when I was learning to write Windows programs from scratch - this is an updated version of it. Unlike most renderings of this fractal, it uses an exposure function to get smooth gradients and scatters the horizontal coordinate around the window so you get both progressively improvements in image quality and can see very fine details (such as the horizontal lines of miniature versions of the entire picture).

You can zoom in by dragging a rectangle over the image with the left mouse button, and zoom out by dragging a rectangle with the right mouse button.

Checksum utility

Wednesday, August 17th, 2011I couldn't find a good CRC32 checksum utility that properly handled wildcards, so I wrote my own.

I actually first wrote this a very long time ago using DJGPP. Actually, thinking about it it might have been the first 32-bit program I ever wrote. That compiler made it very easy because the command-line handling did the directory recursion for me (though it did mean you had to use the awkward syntax "crc32 ...\*" instead of "crc32 *" or "crc32 .". However, 64-bit Windows won't run DOS programs so it needed a rewrite, and once I had completed the filesystem routines I mentioned before it was just as easy.

This program is very handy for comparing contents of large directories across machines ("windiff -T" would copy everything across the network so would take forever.) Just run it on the two directories locally and compare the output.

I used to use this for weekly backups of my machine. It's nice to see exactly what's changed since the last backup so I can delete the backup files made by my text editor and anything else I didn't really mean to keep. Nowadays I use rsync to a Linux machine which amounts to something very similar but more automatically.

Audio codec idea

Tuesday, August 16th, 2011I was writing some audio code recently and it gave me an idea for an audio compression strategy. I don't know enough about audio compression to know for sure if it's been done before, but here goes anyway. The idea is that you have a selection of short, repeating waveforms (e.g. square wave, sine wave, sawtooth wave, white noise) and multiple channels each of which can independently play one of these waveforms at some particular frequency and volume. This is almost exactly the way that my physical tone matrix renders audio.

I was thinking that with enough channels, enough possible waveforms to choose from, enough frequencies, enough volumes and changing the parameters quickly enough, one could make a (perceptually) good approximation to any input waveform. And because the model sort of reflects how most of the things we listen to are created in the first place (a piece of music consists of multiple instruments playing at different volumes and pitches but each generally consisting of a fundamental tone with overtones of various intensities which might change throughout the course of any given note) this might actually give a pretty good approximation in a very small space.

One big problem is how to choose the right pitch, volume and waveform for each channel. The first thing I tried was using a genetic algorithm - the set of waveforms and the pitch/volume/channel information being the "genome" and the rendered waveform being the "phenome". The fitness function is just mean squared error (though eventually I'd like to switch to a more sophisticated psycho-acoustic model like that used by MP3 encoders). There is a population of genomes and the best ones survive, mutate and recombine to make the new population. Recomputing the phenome is not too expensive as the computations are very easy and each pitch/volume/waveform datum only affects a small amount of the phenome. Changing a bit in one of the waveforms is more expensive though as you have to go through all the pieces of the phenome that use that waveform.

Unfortunately it doesn't seem to be converging on the input waveform at all yet (it probably would if I left it long enough, it's just far too slow). The next thing I want to try is seeding the initial genomes with some kind of reasonable approximation to the initial waveform, and seeding the waveforms themselves with some random set of overtones modulated by a range of power laws. To seed the initial genomes, I'll need to break the input waveform into pieces, do an FFT on each piece to find the frequencies, pick the best waveform/pitch/frequency combination for that piece, then subtract it and repeat until we've picked a waveform for each channel.

Even if this codec doesn't beat existing codecs on quality per bitrate metrics, it could still be extremely useful because very little CPU power is required to play it back (I've already proved that a 16MHz Atmel AVR ATMega32 can do 16 channels of this kind of rendering in real time at 16KHz using less than half its capacity). If you premultiply each waveform by each possible volume you can playback on hardware that doesn't even have a multiplier (at significant cost to quality and/or bitrate).

Another fun thing about this system is that you could train it on a large number of sounds at once and come up with a set of waveforms that are good for compressing lots of different things. These could then be baked into the encoder and decoder instead of being transmitted with the rest of the data, leading to further savings. I wonder what the optimal waveforms would sound like.

This idea owes something to Mahoney's c64mp3 and cubase64 demos (unfortunately Mahoney's site breaks inbound links but the cubase64 link is in the 2nd of October 2010 section under "Recent Changes"). However, I think the sound quality of this scheme is likely to be much better than c64mp3 since we're not limited to a one channel plus noise.

Perceptual colour space update

Monday, August 15th, 2011Last year, I wrote about some code I had written to find maximally distributed sets of colours in perceptual colour space. I was having some problems with the code at the time, but since then I have got it working. I fixed it by only repelling each particle from the nearest one to it - then particles quickly settle into the points where they are equidistant from the nearest particles on each side.

Here are the colours it came up with in LUV space:

And here are the colours it came up with in LAB space:

I also did a variation where it just chooses a different hue for each colour, maximizing the saturation (so arranging colours in a ring, rather than throughout a volume) - this is the LAB version but the LUV version is very similar:

I want to do a little flash applet so you can see how the colour particles repel and rotate them around in 3D space - it's a very good way to visualize the perceptual colour solids.

Run a random file

Sunday, August 14th, 2011I have a collection of childrens' TV shows on a computer connected to the family TV. It's a good way to allow the children to watch some TV for a limited period of time without adverts. When one show finishes and they ask me to put another one, I often say "Okay, but you have to wait 20 minutes". Usually in that time they find something else to do and forget about the TV.

Picking what to put on can be a bother though - if I try to play them in order I forget which one I put on last, and if I try to pick one at random I'll invariably pick some more often than others. So I wrote run_random (binary). This program takes its arguments, expands wildcards and recurses directories to obtain a list of files and then picks one at random and calls ShellExecute() on it (which, if it's a video file, will play the video).

It's a handy little utility but it's also a good testcase for some path and directory handling routines I wrote for various other purposes.

Oscilloscope waveform rendering

Saturday, August 13th, 2011One thing that always annoyed me about Cool Edit Pro (now Adobe Audition which seems to be much more annoying to use in several respects) is the quality of the waveform visualization. What it seems to do is find the highest and lowest signals at each horizontal pixel and draw a vertical line between them (interpolating as necessary if you're zoomed way in). That means that when you're zoomed, the waveform is a big blob of green with very little useful detail in it. Only green and black pixels are used - no intermediate colours are used to smooth the image. Other waveform editors I've tried seem to work in similar ways.

I think we should be able to do much better. Suppose we rendered the waveform at at an extremely high resolution (one pixel per sample horizontally or better) and then downsampled it to our window size. There's a problem with doing it that way, though - unless the waveform only covers a few pixels vertically, the waveform is going to be spread out amongst too many pixels and will be very dark. Imagine an analog oscilloscope with the beam intensity set at normal for a horizontal trace and then visualizing a signal which oscillates over the entire display at high frequency - most of the signal will be invisible with the exception of the peaks.

The solution to this with the analog oscilloscope is to increase the beam intensity. We can do exactly the same thing with a digital visualizer too - we're not limited to 100% intensity for our intermediate calculations (if a pixel ends up at more than 100% in the final image, we can clamp it or use an exposure function). Increasing the intensity to infinity gives us the Cool Edit Pro visualization again - the any pixel the waveform passes through is fully lit.

What does it look like? Watch this space to find out!

Edit 14th July 2013:

Cleartype for images

Friday, August 12th, 2011It occurs to me that one could use similar sub-pixel techniques used by ClearType to improve the resolution of graphics as well as text. One way to do this would be to downsample an image using a kernel with different horizontal phases for red, green and blue. However, this wouldn't take into account the fact that when making one pixel red, you need to make a nearby one cyan to avoid annoying fringes. With text, the geometry can be controlled so that the widths of horizontal spans are always a multiple of 3 subpixels, but if you're starting with a bitmap you can't really adjust the geometry. Perhaps it wouldn't matter in practice: the same effect happens with NTSC television signals - if you get a black and white pattern with horizontal frequency components in the chroma band you'll get colour fringes for exactly the same reason, but you usually don't notice it because for most images it evens out on average.

I'll have to do some experiments and see what happens.